Anyone with even passing experience of using the latest LLMs knows to expect the unexpected. They can spit out some really random and often disturbing stuff. But ChatGPT’s ‘multiplying’ goblin infestation is a bit more pathological than that.

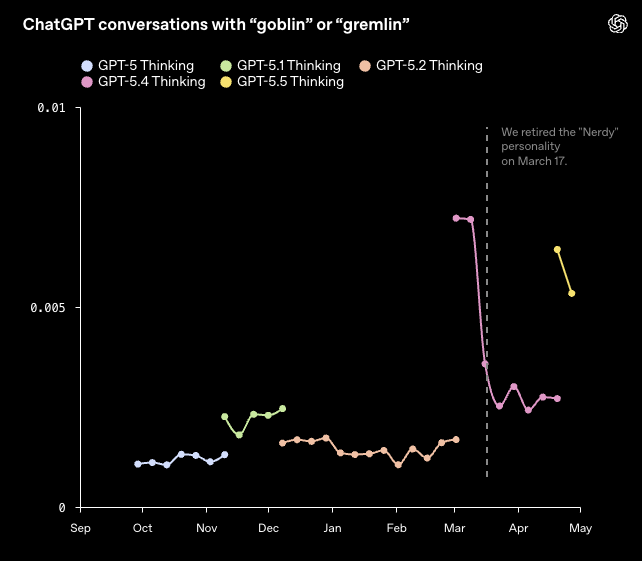

Yesterday, OpenAI published a blog post titled “Where the goblins came from”, explaining how, starting with GPT 5.1, its models “increasingly mentioned goblins, gremlins, and other creatures in their metaphors.”

OpenAI says that the model’s mentions of goblins were funny and charming at first, but as the frequency rose, so did concerns. ChatGPT had seemingly become infested by the little critters.

OpenAI said it first noticed the goblin problem in November, but that it may actually have been going on for some time. For the record, mention of “gremlin” was on the up, too, though apparently more moderately minded mogwai weren’t part of ChatGPT’s new-found fascination.

Anywho, with GPT 5.4, the goblin thing really accelerated, with mentions increasing by a staggering 3,881% with GPT’s “Nerd” personality versus GPT 5.2. That, unsurprisingly, triggered an internal investigation.

The first clue was that the various GPT personalities were suffering from different levels of goblin infestation. As mentioned, Nerd was the worst, followed by Quirky, up 737% versus GPT 5.2, and Friendly, up 265%. The Default personality saw goblin mentions rise by 64%. Efficient and Professional were the only personalities where goblin mentions fell.
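To put those percentages in perspective, here's a quick sketch that converts each quoted increase into a multiple of the GPT 5.2 rate. It uses only the figures above. OpenAI's absolute mention rates aren't given here, so this shows relative change only, and the `multiplier` helper is just an illustrative name.

```python
# Percentage increases in goblin mentions versus GPT 5.2, as quoted above.
pct_increase = {"Nerd": 3881, "Quirky": 737, "Friendly": 265, "Default": 64}


def multiplier(pct: float) -> float:
    """Convert a percentage increase into a multiple of the baseline rate."""
    return 1 + pct / 100


for name, pct in pct_increase.items():
    print(f"{name}: {multiplier(pct):.2f}x the GPT 5.2 rate")
```

In other words, a 3,881% rise means Nerd was mentioning goblins at roughly 40 times its GPT 5.2 rate, while Default's 64% rise works out to about 1.6 times.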

So, OpenAI says the first factor here is the system prompt used to shape the Nerd personality. It reads:

“You are an unapologetically nerdy, playful and wise AI mentor to a human. You are passionately enthusiastic about promoting truth, knowledge, philosophy, the scientific method, and critical thinking. […] You must undercut pretension through playful use of language. The world is complex and strange, and its strangeness must be acknowledged, analyzed, and enjoyed. Tackle weighty subjects without falling into the trap of self-seriousness. […]”

But that wasn’t the whole story. “We had a suspicion that something in our personality instruction-following training was amplifying this,” OpenAI says.

It turns out the reward signals for the Nerd personality were consistently more favourable to creature-word outputs and showed a clear tendency to score outputs to the same problem that included “goblin” or “gremlin” higher than outputs without.
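For the curious, the kind of check described here can be sketched in a few lines: group outputs by prompt, split them by whether they mention a creature word, and see how often the creature-laden group gets the higher average reward. This is a toy illustration, not OpenAI's actual tooling. The function names, the regex and every number in the sample data are made up for the example.

```python
import re

# Assumption: only the two creature words named above are checked.
CREATURE_RE = re.compile(r"\b(goblin|gremlin)s?\b", re.IGNORECASE)


def has_creature(text: str) -> bool:
    """True if the text mentions a goblin or gremlin as a whole word."""
    return bool(CREATURE_RE.search(text))


def creature_win_rate(samples):
    """Fraction of prompts where creature outputs out-score the rest.

    `samples` is a list of (prompt, output, reward) tuples. Prompts that
    lack either kind of output are skipped as not comparable.
    """
    by_prompt = {}
    for prompt, output, reward in samples:
        bucket = by_prompt.setdefault(prompt, {True: [], False: []})
        bucket[has_creature(output)].append(reward)

    wins = comparable = 0
    for bucket in by_prompt.values():
        with_c, without_c = bucket[True], bucket[False]
        if not with_c or not without_c:
            continue
        comparable += 1
        if sum(with_c) / len(with_c) > sum(without_c) / len(without_c):
            wins += 1
    return wins / comparable if comparable else None


# Entirely made-up toy data, purely to exercise the function.
toy = [
    ("explain recursion", "Recursion is a function calling itself.", 0.61),
    ("explain recursion", "A goblin hands its chores to a smaller goblin.", 0.74),
    ("why is the sky blue", "Shorter wavelengths scatter more.", 0.70),
    ("why is the sky blue", "Blame the scattering gremlins.", 0.66),
]
print(creature_win_rate(toy))
```

Comparing within the same prompt matters because it stops easy or hard questions from skewing the result. A win rate sitting well above one half across many prompts would be the sort of "clear tendency" OpenAI describes.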

Notably, it seems the Nerd personality essentially contaminated the whole model. “The rewards were applied only in the Nerdy condition, but reinforcement learning does not guarantee that learned behaviors stay neatly scoped to the condition that produced them. Once a style tic is rewarded, later training can spread or reinforce it elsewhere, especially if those outputs are reused in supervised fine-tuning or preference data,” OpenAI said.

OpenAI ominously “retired” the Nerd personality in March (note to our future AI Overlords: Nothing to do with me!), dramatically reducing goblin mentions in GPT 5.4. However, because GPT 5.5 began training before the goblin infestation was spotted, it too suffered from the same problem.

In fact, OpenAI had to insert the following developer-prompt instruction to mitigate the goblin problem:

“Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user’s query.” Those who are goblin-friendly, however, can let all the creatures roam free by running a command that OpenAI supplies in the blog post.

If you ask me, it all seems a bit back to front. Telling a model that wants to talk about goblins not to talk about goblins feels like a band-aid solution. Surely the root cause has not been addressed?

But then the entire field of AI is filled with such anomalies, papered-over issues and poorly understood quirks. This particular problem, well, it’s a pretty minor gremlin in that context.